I’m seeing some strange reconstruction failures in heterogeneous refinement. They seem to happen when I use a mix of ab initio volumes and volumes from a previous heterogeneous refinement together as the initial references.

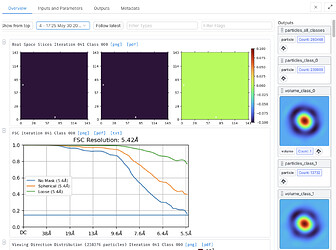

The final iteration has results like these:

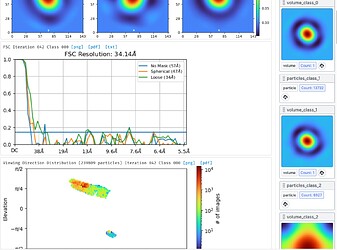

At a preceding iteration, the reconstructions seem to have failed:

Before that iteration, everything looks normal and all the classes are populated. I’m still running some tests to confirm, but it seems like the problem might be caused by combining hard classification with initial references of different sizes. Will update.

Update

It seems to happen when the references are a mix of ab initio and heterogeneous refinement volumes and Force hard classification is on. Using a previous homogeneous refinement together with the ab initio classes seems to work. Different-sized references plus hard classification triggers it.

Assuming you are referring to the box sizes of the input references:

- How many input references are there?

- What are their box sizes?

- What is the Refinement box size (Voxels) of the Heterogeneous Refinement job?

- Does a clone of the Heterogeneous Refinement job with Force hard classification turned off behave “normally”?

Example 1 (cryoSPARC 4 beta current patch)

- 4 references (Het. ref, 3x ab initio)

- 128 vx, 64 vx, 64 vx, 64 vx

- 128 vx (data is 96 px)

- Yes

Example 2

- 5 references (Het. ref, 4x ab initio)

- 128 vx, 64 vx, 64 vx, 64 vx, 64 vx

- 192 vx (data is 192 px)

- Yes

Example 3 (Different dataset, cryoSPARC 3.3.1)

- 3 references (Homo. ref, 2x ab initio)

- 288 vx, 64 vx, 64 vx

- 144 vx (data is 288 px)

- Yes

@DanielAsarnow we’re working on reproducing this internally! Out of curiosity, is hard classification something you find useful in the hetero refine job?

I actually just started trying it. I usually use 4x Fourier-cropped particles for 2D classification, discarding just the most obviously bad classes, and then run a Het. refine using 1 good reference and several bad references to remove junk that isn’t really the particle. The bad references are usually a mix of finished ab initio volumes (that look like hole edges or empty micelles) and random-ish volumes with compact support, made by starting and immediately killing an ab initio job. This “garbage collection” approach is actually very effective at removing non-particles from most datasets, and it doesn’t require much thought or manual inspection. It’s conceptually similar to phase-randomized classification. One of my friends suggested hard classification might make sense with this strategy, so that the junk doesn’t affect the good class reconstruction and the bad classes don’t take on features of the real particle.

I just tried this and I see the same thing - all classes go to featureless balls, whereas classification proceeds fine with “force hard classification” off, or if all input classes have been resampled to the same box size.
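
For anyone wanting to try the same-box-size workaround outside of cryoSPARC, here is a minimal numpy sketch of Fourier padding/cropping a cubic map to a new box size. It assumes the maps are already loaded as cubic 3D arrays (e.g. read from the .mrc files with a library of your choice); `fourier_resample` is a hypothetical helper, not cryoSPARC’s own implementation, and the post above doesn’t say how the classes were actually resampled. Because the physical extent is preserved, the pixel size scales by `old_box / new_box` (a 64 vx ab initio map resampled to 128 vx ends up with half the pixel size), so the header pixel size has to be updated to match.

```python
import numpy as np


def fourier_resample(vol: np.ndarray, new_box: int) -> np.ndarray:
    """Resample a cubic map to new_box voxels per side by Fourier padding/cropping.

    Hypothetical helper (sketch only). Physical extent is preserved, so the
    pixel size of the result is old_pixel_size * old_box / new_box.
    """
    old_box = vol.shape[0]
    if vol.shape != (old_box,) * 3:
        raise ValueError("expects a cubic volume")
    # Centred 3D spectrum: the DC term sits at index old_box // 2 on each axis
    spec = np.fft.fftshift(np.fft.fftn(vol))
    out = np.zeros((new_box,) * 3, dtype=complex)
    # Keep the central n^3 block of frequencies shared by both box sizes
    n = min(old_box, new_box)
    src = slice(old_box // 2 - n // 2, old_box // 2 - n // 2 + n)
    dst = slice(new_box // 2 - n // 2, new_box // 2 - n // 2 + n)
    out[dst, dst, dst] = spec[src, src, src]
    # ifftn divides by new_box**3, so rescale to keep the mean density unchanged
    out *= (new_box / old_box) ** 3
    # Drop the tiny imaginary residue left by the unpaired Nyquist plane
    return np.fft.ifftn(np.fft.ifftshift(out)).real
```

For example, `fourier_resample(ab_initio_map, 128)` would bring a 64 vx ab initio map up to the 128 vx box of the Het. refine reference in Examples 1 and 2. Padding in Fourier space adds no new information; it only interpolates the existing map onto a finer grid.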

To add to this (admittedly somewhat off-topic) discussion, I have also found hard classification very helpful when I have one domain that is consistently of very high quality and another which is unstable/unstructured/flexible.

I find that without hard classification, I end up with maps that all look the same (I assume because particles contribute more or less equally to every class, given the large, consistent domain).

However, with hard classification, I get classes that have varying quality in the less-structured domain, which I can then use for further refinement/classification.

What’s a guy gotta do to become a beta 4 tester?

I can also reproduce this error with volumes that are all the same size. I used volumes from 2 homogeneous refinement jobs with the same box size, set the reconstruction size to 288 voxels (same as the input maps), and turned hard classification on. A clone of the job with the reconstruction size set to 144 vx completed normally.