Hi,

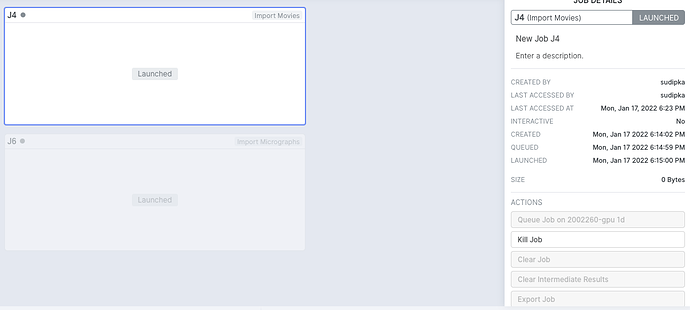

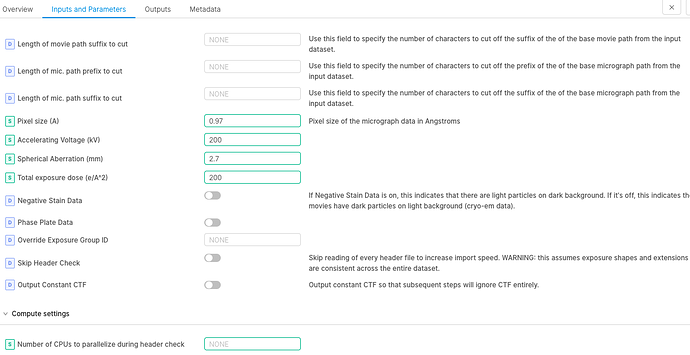

I am having issues running jobs: a job gets launched but never starts. The installation looks fine, so the problem could be with the cluster configuration or the submission script. Any suggestions would be really helpful. I did try running the jobs with the default number of CPUs, but that didn't help.

Thanks

Sudeep

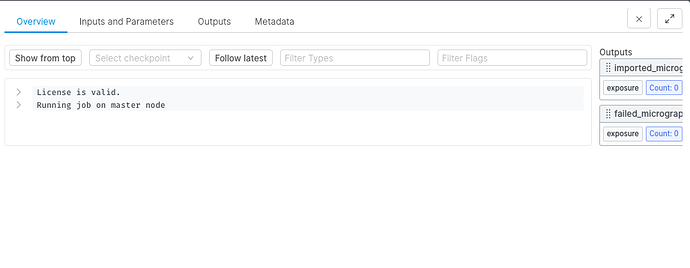

@sudeepka What does the Overview tab of a “Launched” job show?

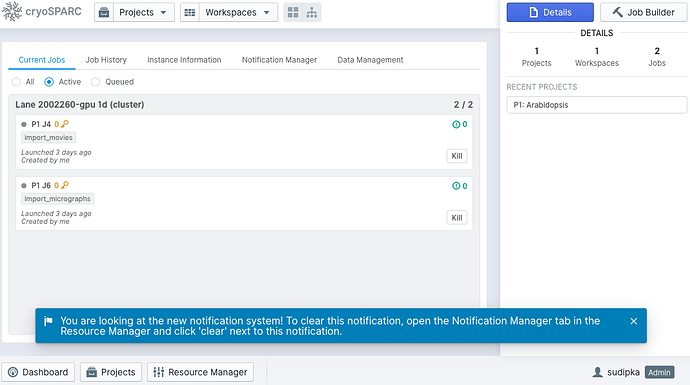

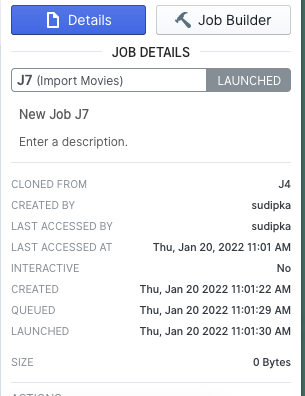

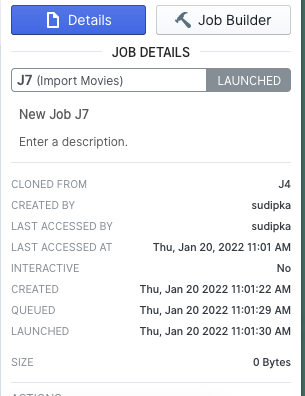

Hi @wtempel, here is the screenshot of the Overview tab of the launched job.

Hello @sudeepka ,

Did you run cryosparcm cluster connect?

Do you see Lane "something" (cluster) on the Resource Manager?

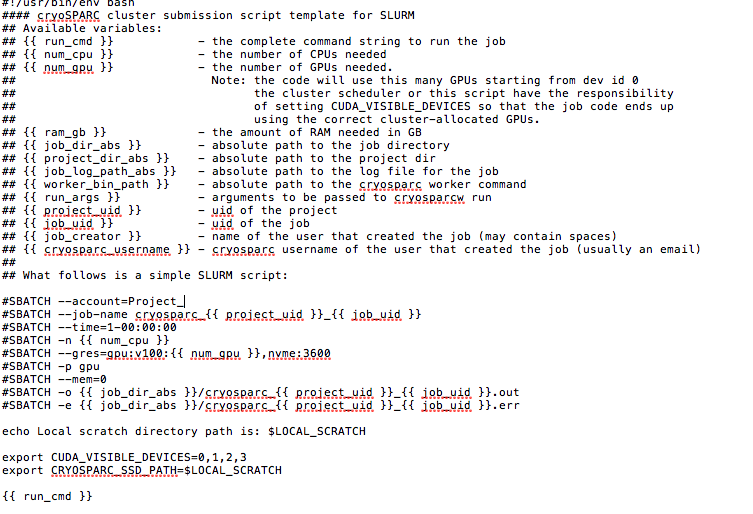

What do your cluster_info.json and cluster_script.sh look like?

Best,

Juan

@sudeepka If you haven’t yet resolved the problem, please post the output of

cryosparcm log command_core

shortly after scheduling a job, to ensure the log includes a recent record of a cluster job.

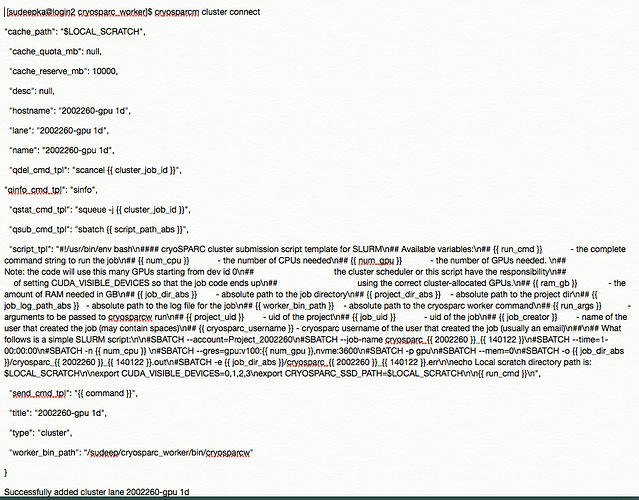

@jucastil I ran cryosparcm cluster connect again, and this is how it looks.

"Do you see Lane "something" (cluster) on the Resource Manager?" No, I don't see anything like this.

I already shared the .sh file earlier in this thread. Here is my .json file:

"name" : "2002260-gpu 1d",

"worker_bin_path" : "/sudeep/cryosparc_worker/bin/cryosparcw",

"cache_path" : "$LOCAL_SCRATCH",

"send_cmd_tpl" : "{{ command }}",

"qsub_cmd_tpl" : "sbatch {{ script_path_abs }}",

"qstat_cmd_tpl" : "squeue -j {{ cluster_job_id }}",

"qdel_cmd_tpl" : "scancel {{ cluster_job_id }}",

"qinfo_cmd_tpl" : "sinfo",

"transfer_cmd_tpl" : "cp {{ src_path }} {{ dest_path }}"
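For anyone comparing their own file against the one above, here is a minimal sketch of a sanity check for cluster_info.json. It assumes the fragment above is wrapped in braces to form a complete JSON object, and it treats the nine keys shown above as the expected set, which is an assumption based on this thread rather than on the cryoSPARC documentation. The function name `check_cluster_info` is hypothetical.

```python
import json

# Keys copied from the cluster_info.json fragment posted in this thread.
# Treating all of them as expected is an assumption, not an official rule.
EXPECTED_KEYS = [
    "name",
    "worker_bin_path",
    "cache_path",
    "send_cmd_tpl",
    "qsub_cmd_tpl",
    "qstat_cmd_tpl",
    "qdel_cmd_tpl",
    "qinfo_cmd_tpl",
    "transfer_cmd_tpl",
]


def check_cluster_info(text):
    """Parse cluster_info.json text and return the expected keys it lacks.

    json.loads raises json.JSONDecodeError if the text is not valid JSON,
    for example when the surrounding braces or a comma are missing.
    """
    info = json.loads(text)
    return [key for key in EXPECTED_KEYS if key not in info]


# The fragment from this thread, wrapped in braces (hypothetical full file).
EXAMPLE = """{
    "name" : "2002260-gpu 1d",
    "worker_bin_path" : "/sudeep/cryosparc_worker/bin/cryosparcw",
    "cache_path" : "$LOCAL_SCRATCH",
    "send_cmd_tpl" : "{{ command }}",
    "qsub_cmd_tpl" : "sbatch {{ script_path_abs }}",
    "qstat_cmd_tpl" : "squeue -j {{ cluster_job_id }}",
    "qdel_cmd_tpl" : "scancel {{ cluster_job_id }}",
    "qinfo_cmd_tpl" : "sinfo",
    "transfer_cmd_tpl" : "cp {{ src_path }} {{ dest_path }}"
}"""

if __name__ == "__main__":
    missing = check_cluster_info(EXAMPLE)
    print("missing keys:", missing)
```

This only checks syntax and key presence. It cannot tell whether the paths or the sbatch command actually work on a given cluster.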

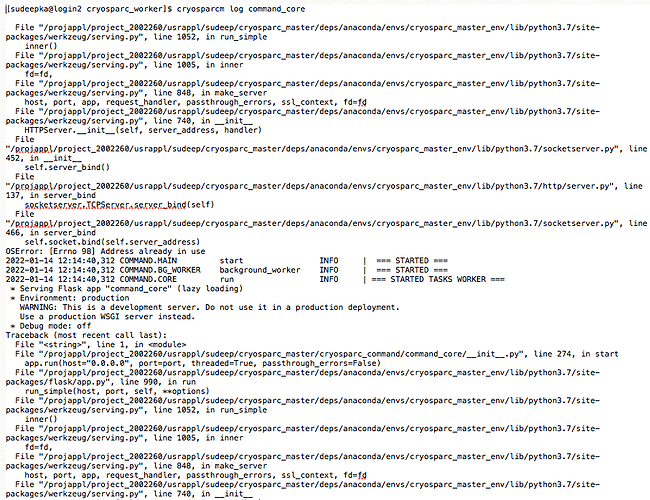

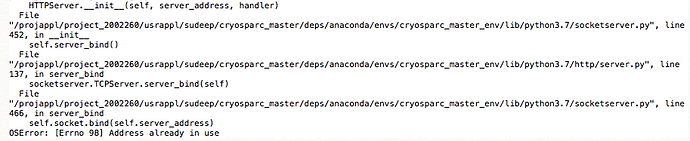

@wtempel No, I have not resolved the problem yet. When I run cryosparcm log command_core I get this output, and I don't see the recently started job in it. I ran the command a few times, but the recent record never appears. I also see this error: OSError: [Errno 98] Address already in use. Here are the details:

Hi @sudeepka @wtempel, to me this looks like a communication problem (Python issue).

cryoSPARC tries to launch the job, but the port is not open or not available…

I would try stopping the cryosparc instance and starting it again pointing to a different port…

@sudeepka Is there another cryoSPARC master instance running on the same computer, perhaps installed by another user and relying on the same range of network ports?

Multiple cryoSPARC master instances on the same computer must be carefully isolated from each other.
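A quick way to see whether something already holds the ports in question is to try binding to them, which is the same operation that fails with Errno 98 (EADDRINUSE on Linux). The sketch below is only illustrative: the base port 39000 and the count of 10 consecutive ports are assumptions about a default installation, so substitute the values your instance was actually configured with. The function name `port_in_use` is hypothetical.

```python
import errno
import socket

# Assumed values for a default installation; adjust to your configuration.
BASE_PORT = 39000
PORT_COUNT = 10


def port_in_use(port, host="0.0.0.0"):
    """Return True if binding to (host, port) fails with EADDRINUSE."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError as exc:
            if exc.errno == errno.EADDRINUSE:
                return True
            raise  # permission errors and the like are reported as-is
    return False


if __name__ == "__main__":
    for port in range(BASE_PORT, BASE_PORT + PORT_COUNT):
        state = "in use" if port_in_use(port) else "free"
        print(f"port {port}: {state}")
```

Run this on the master node while cryoSPARC is stopped. Any port still reported as in use is held by another process, possibly a second master instance or a leftover process from a previous run.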

@wtempel Thanks again for reaching out. I managed to fix it. My master was in a bad state, so I killed all the ongoing jobs, restarted cryosparcm, and ran cryosparcm cluster connect, which resolved the problem.