Hi,

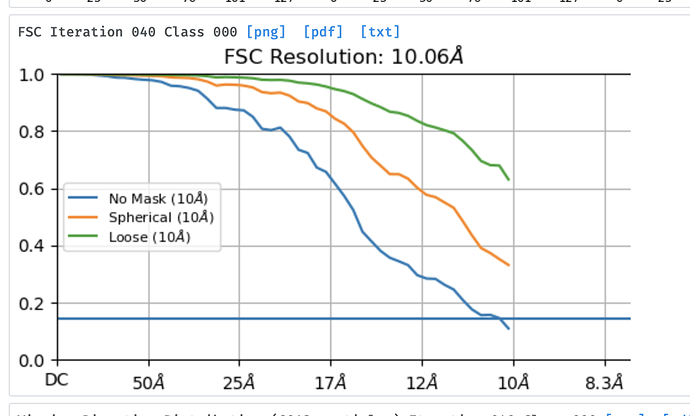

I’ve noticed that when I explicitly set a maximum alignment resolution during heterogeneous refinement, the backprojection resolution seems to be set to the backprojection resolution factor multiplied by the maximum alignment resolution. I'd expect it to be the resolution factor multiplied by the best resolution of any class in the set. For example, see the attached image, where I used a maximum alignment resolution of 20 Å and a backprojection resolution factor of 2.

The expected behavior resumes for the final full iterations. Is this a bug, or is it intended? I guess it doesn’t really matter in most respects, but it could affect convergence, since one of the convergence criteria is resolution improvement.

Cheers

Oli