Hi all,

About CTF estimation, motion correction, template picking, blob picking, are there anyways to enable ssd cache?

I have already added the ssd cache path, but after compute job submission into clusters, the jobs are always having ssd cache to be false.

I dare not to update the worker application with bin/cryosparcw connect … --update because it is a cluster not a worker node setup.

For ab-initio and refinement, I am able to enable ssd cache, though.

thanks!

Regards,

qitsweauca

Hello @qitsweauca,

If you have some kind of storage attached to the cluster (GPFS?NFS?) maybe you can speak with your administrators and ask them to reserve some cache for the nodes. Then you just need to customize your cluster_info.json with the path, something like

"cache_path": "/storage/cryosparc",

"cache_reserve_mb": 10000,

"cache_quota_mb" : 10000,

after that, of course cryosparcm cluster connect.

Best,

Juan

Hi Juan @jucastil

Thank you for your reply.

The job is sent to compute nodes and the cache is the ssd on the compute nodes; therefore, in the worker application’s config file, I also add export CRYOSPARC_SSD_PATH="$PBS_JOBFS"

for dynamic path.

Previously, cache_path is also defined in the cluster_info.json

After you pointed out all the three items, I also added “cache_reserve_mb” and “cache_quota_mb” to my PBS script. And certainly cyrosparcm cluster connect after modifications, and I even restart the entire cryoSPARC.

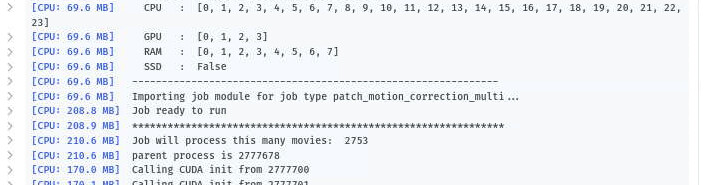

but the job submission result shown in the following screenshot is still having “SSD : False”

If this is false, it would also mean that the input files will not be loaded into the cache SSD space, but i/o read and write directly to the shared storage (Lustre filesystems) across the nodes where both master and worker applications are.

In 3D refinement and ab-initio, there are buttons for activating SSD cache “Cache particle images on SSD”, and it will successfully load input files into the cache without problems and the performance is so much better.

Regards,

qitsweauca

This is by design.

The jobtypes cited read their input files only once and do not benefit from caching to fast onboard drives in the same way as other jobtypes (e.g. classification, refinement) that access the same set of particle images several times over multiple iterations.

1 Like

Hi @leetleyang,

Thank you so much!

I didn’t notice about the details of this, and it makes sense now.

I looked up other cryosparc setup with node worker lanes, and it seems to be also not using ssd cache.

anyway thank you!

Regards,

qitsweauca