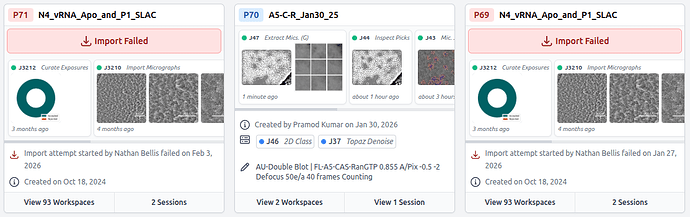

Nope, both 5.0 and 5.0.1 fail. P20 was detached by CryoSPARC during the update to 5.0.

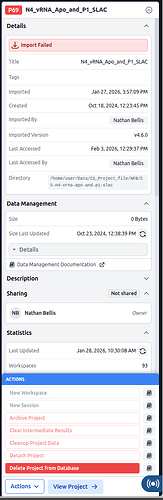

P69 is the import done in 5.0.

P71 is the import done in 5.0.1.

Neither of them works.

P69

Traceback (most recent call last):

File "/home/user/cryosparc/cryosparc_master/tools/cryosparc/api.py", line 238, in _call

return self._handle_response(_schema, res)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/user/cryosparc/cryosparc_master/tools/cryosparc/api.py", line 158, in _handle_response

res.raise_for_status()

File "/home/user/cryosparc/cryosparc_master/.pixi/envs/master/lib/python3.12/site-packages/httpx/_models.py", line 829, in raise_for_status

raise HTTPStatusError(message, request=request, response=self)

httpx.HTTPStatusError: Client error '404 Not Found' for url 'http://galileo:39002/projects/P20'

For more information check: 404 Not Found - HTTP | MDN

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "<frozen runpy>", line 198, in _run_module_as_main

File "<frozen runpy>", line 88, in _run_code

File "/home/user/cryosparc/cryosparc_master/cli/setup_client.py", line 28, in <module>

result = eval(sys.argv[1], {"api": api, "db": db, "gfs": gfs}, {})

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "<string>", line 1, in <module>

File "/home/user/cryosparc/cryosparc_master/tools/cryosparc/api.py", line 324, in endpoint

return namespace._call(method, path, schema, *args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/user/cryosparc/cryosparc_master/tools/cryosparc/api.py", line 240, in _call

raise APIError("received error response", res=err.response) from err

tools.cryosparc.errors.APIError: *** [API] (GET http://galileo:39002/projects/P20, code 404) received error response

Response data:

{

"detail": "Project P20 not found"

}

2026-02-04 15:38:30,229 app cryosparc_except ERROR | GET http://galileo:39002/projects/P20 encountered 404 exception: Project P20 not found

2026-02-04 15:38:30,229 uvicorn.access send INFO | 127.0.0.1:34180 - "GET /projects/P20 HTTP/1.1" 404

{"id": "697934b5e755bd1dd321fff4", "updated_at": "2026-02-03T18:29:37.137000Z", "created_at": "2024-10-18T17:23:45.901000Z", "autodump": false, "uid": "P69", "project_dir": "/home/user/Data/CS_Project_file/NFB/CS-n4-vrna-apo-and-p1-slac", "owner_user_id": "665112e30ec36e01397b8dde", "title": "N4_vRNA_Apo_and_P1_SLAC", "description": "Enter a description.", "project_params_pdef": {}, "queue_paused": false, "deleted": false, "deleting": false, "users_with_access": ["665112e30ec36e01397b8dde"], "size": 0, "size_last_updated": "2024-10-23T17:38:39.921000Z", "last_accessed": {"name": "nfbellis", "accessed_at": "2026-02-03T18:29:37.137000Z"}, "archived": false, "detached": false, "generate_intermediate_results_settings": {"class_2D_new": false, "class_3D": false, "var_3D_disp": false}, "last_exp_group_id_used": 4, "imported_at": "2026-01-27T21:57:09.495000Z", "import_status": "failed", "project_stats": {"workspace_count": 93, "session_count": 2, "job_count": 2643, "job_types": {"select_2D": 508, "class_2D_new": 358, "homo_reconstruct": 315, "homo_abinit": 256, "nonuniform_refine_new": 231, "new_local_refine": 162, "class_3D": 160, "import_volumes": 65, "volume_tools": 61, "rtp_worker": 50, "extract_micrographs_multi": 50, "hetero_refine": 48, "align_3D_new": 31, "particle_sets": 25, "ctf_refine_local": 24, "curate_exposures_v2": 23, "template_picker_gpu": 22, "inspect_picks_v2": 19, "remove_duplicate_particles": 18, "create_templates": 16, "subset_particles": 15, "cache_particles": 14, "extract_micrographs_cpu_parallel": 14, "downsample_particles": 13, "topaz_extract": 13, "local_resolution": 12, "volume_alignment_tools": 12, "reference_motion_correction": 9, "homo_refine_new": 8, "particle_subtract": 8, "simulator_gpu": 7, "blob_picker_gpu": 7, "ctf_refine_global": 6, "rebalance_3D": 6, "autoselect_2D": 5, "patch_ctf_estimation_multi": 4, "exposure_sets": 4, "orientation_diagnostics": 4, "rebalance_classes_2D": 4, "topaz_train": 4, "reference_select_2D": 4, "patch_motion_correction_multi": 4, "export_live_exposures": 3, "export_live_particles": 3, "snowflake": 2, "live_session": 2, "var_3D": 2, "manual_picker_v2": 2, "class_2D_streaming": 2, "reconstruct_2D": 2, "junk_detector_v1": 1, "var_3D_disp": 1, "local_filter": 1, "auto_blob_picker_gpu": 1, "import_movies": 1, "import_micrographs": 1}, "job_sections": {"import": 67, "motion_correction": 13, "ctf_estimation": 4, "exposure_curation": 24, "particle_picking": 67, "extraction": 77, "deep_picker": 17, "particle_curation": 897, "reconstruction": 256, "refinement": 602, "ctf_refinement": 30, "variability": 163, "postprocessing": 17, "local_refinement": 170, "utilities": 167, "simulations": 7, "live": 60}, "job_status": {"completed": 2498, "building": 140, "killed": 5}, "updated_at": "2026-01-28T16:30:08.236000Z"}, "created_at_version": "v4.6.0", "is_cleanup_in_progress": false, "tags": [], "starred_by": [], "autodump_failed": false, "autodump_errors": [], "uid_num": 69}
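The import-related fields of a project document like the one above can be pulled out with a short script. A minimal sketch using only the standard library, assuming the document is available as JSON text; `P69_DOC` is an abbreviated copy of the P69 fields pasted above, and `summarize_project` is a hypothetical helper, not part of the CryoSPARC tools:

```python
import json

# Abbreviated copy of the P69 project document pasted above
# (only the fields this sketch reads are kept).
P69_DOC = """
{"uid": "P69", "detached": false, "import_status": "failed",
 "imported_at": "2026-01-27T21:57:09.495000Z", "created_at_version": "v4.6.0",
 "project_stats": {"job_count": 2643,
                   "job_status": {"completed": 2498, "building": 140, "killed": 5}}}
"""


def summarize_project(doc_text: str) -> dict:
    """Hypothetical helper: extract the import-related fields from a project document."""
    doc = json.loads(doc_text)
    stats = doc.get("project_stats", {})
    status_counts = stats.get("job_status", {})
    return {
        "uid": doc["uid"],
        "import_status": doc.get("import_status"),
        "detached": doc.get("detached"),
        "job_count": stats.get("job_count"),
        # Sanity check: the per-status counts should add up to job_count.
        "status_total": sum(status_counts.values()),
        "status_counts": status_counts,
    }


if __name__ == "__main__":
    for key, value in summarize_project(P69_DOC).items():
        print(f"{key}: {value}")
```

For the document posted here, this reports `import_status: failed` with `detached: false`, and the per-status counts (2498 + 140 + 5) add up to the 2643 jobs listed in `job_count`.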

2026-02-02 12:43:09,836 uvicorn.access send INFO | 127.0.0.1:46796 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-02 12:43:09,877 uvicorn.access send INFO | 127.0.0.1:46808 - "POST /projects/P69%3Aview HTTP/1.1" 200

2026-02-02 12:45:37,366 uvicorn.access send INFO | 127.0.0.1:56616 - "POST /projects/P69%3Astar HTTP/1.1" 422

2026-02-02 14:11:28,476 uvicorn.access send INFO | 127.0.0.1:57962 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-02 14:11:50,778 uvicorn.access send INFO | 127.0.0.1:53516 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-02 16:56:35,397 uvicorn.access send INFO | 127.0.0.1:34088 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-02 16:56:35,423 uvicorn.access send INFO | 127.0.0.1:34096 - "POST /projects/P69%3Aview HTTP/1.1" 200

2026-02-02 16:56:41,576 uvicorn.access send INFO | 127.0.0.1:47208 - "POST /projects/P69/workspaces/W52%3Aview HTTP/1.1" 200

2026-02-02 16:56:44,197 uvicorn.access send INFO | 127.0.0.1:47216 - "POST /projects/P69/workspaces/W30/jobs/J1063%3Aview HTTP/1.1" 200

2026-02-02 16:56:44,252 uvicorn.access send INFO | 127.0.0.1:47218 - "POST /projects/P69/workspaces/W30/jobs/J1063%3Aview HTTP/1.1" 200

2026-02-02 16:56:47,538 uvicorn.access send INFO | 127.0.0.1:47220 - "POST /projects/P69/workspaces/W52%3Aview HTTP/1.1" 200

2026-02-02 16:56:47,954 uvicorn.access send INFO | 127.0.0.1:55164 - "POST /projects/P69%3Aview HTTP/1.1" 200

2026-02-02 16:56:55,055 uvicorn.access send INFO | 127.0.0.1:55168 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-02 16:57:58,784 uvicorn.access send INFO | 127.0.0.1:38288 - "POST /projects/P69%3Aview HTTP/1.1" 200

2026-02-02 21:02:57,722 uvicorn.access send INFO | 127.0.0.1:55364 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-02 21:02:57,740 uvicorn.access send INFO | 127.0.0.1:55374 - "POST /projects/P69%3Aview HTTP/1.1" 200

2026-02-02 21:03:40,471 uvicorn.access send INFO | 127.0.0.1:48590 - "GET /projects/P69%3Apreview_delete HTTP/1.1" 200

2026-02-02 21:45:05,910 uvicorn.access send INFO | 127.0.0.1:52438 - "POST /projects/P69%3Aview HTTP/1.1" 200

2026-02-02 21:45:06,088 uvicorn.access send INFO | 127.0.0.1:52446 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-02 21:46:03,668 uvicorn.access send INFO | 127.0.0.1:38750 - "GET /projects/P69%3Apreview_delete HTTP/1.1" 200

2026-02-02 21:50:12,887 uvicorn.access send INFO | 127.0.0.1:43226 - "POST /projects/P69%3Aview HTTP/1.1" 200

2026-02-03 12:29:37,119 uvicorn.access send INFO | 127.0.0.1:42746 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-03 12:29:37,150 uvicorn.access send INFO | 127.0.0.1:42748 - "POST /projects/P69%3Aview HTTP/1.1" 200

2026-02-03 14:30:19,946 uvicorn.access send INFO | 127.0.0.1:56352 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-03 14:30:34,924 uvicorn.access send INFO | 127.0.0.1:56366 - "GET /projects/P69%3Apreview_delete HTTP/1.1" 200

2026-02-03 15:14:47,610 uvicorn.access send INFO | 127.0.0.1:42542 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-03 15:42:52,432 uvicorn.access send INFO | 127.0.0.1:59086 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-03 15:52:29,972 uvicorn.access send INFO | 127.0.0.1:44432 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-04 15:35:18,268 uvicorn.access send INFO | 127.0.0.1:38782 - "GET /projects/P69/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-04 15:38:33,105 uvicorn.access send INFO | 127.0.0.1:34192 - "GET /projects/P69 HTTP/1.1" 200
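To check at a glance which projects the server still answers for, the uvicorn access lines can be tallied by project UID and HTTP status. A minimal sketch, assuming the lines use straight double quotes as uvicorn writes them to the log; `SAMPLE_LINES` is copied from the excerpts above, and `tally_by_project` is a hypothetical helper:

```python
import re
from collections import Counter
from urllib.parse import unquote

# Matches uvicorn access-log lines in the format pasted above, e.g.
# 2026-02-04 15:38:33,105 uvicorn.access send INFO | 127.0.0.1:34192 - "GET /projects/P69 HTTP/1.1" 200
ACCESS_RE = re.compile(
    r'^(?P<ts>\S+ \S+) uvicorn\.access send INFO \| (?P<client>\S+) - '
    r'"(?P<method>[A-Z]+) (?P<path>\S+) HTTP/[\d.]+" (?P<status>\d{3})$'
)
# Extracts the project UID at the start of the request path.
PROJECT_RE = re.compile(r"^/projects/(P\d+)")

SAMPLE_LINES = [
    '2026-02-04 15:38:30,229 uvicorn.access send INFO | 127.0.0.1:34180 - "GET /projects/P20 HTTP/1.1" 404',
    '2026-02-02 12:45:37,366 uvicorn.access send INFO | 127.0.0.1:56616 - "POST /projects/P69%3Astar HTTP/1.1" 422',
    '2026-02-04 15:38:33,105 uvicorn.access send INFO | 127.0.0.1:34192 - "GET /projects/P69 HTTP/1.1" 200',
]


def tally_by_project(lines):
    """Hypothetical helper: count (project UID, HTTP status) pairs in access-log lines."""
    counts = Counter()
    for line in lines:
        m = ACCESS_RE.match(line.strip())
        if m is None:
            continue  # not an access-log line (traceback, JSON, other loggers)
        path = unquote(m["path"])  # "%3A" decodes to ":", e.g. /projects/P69:star
        project = PROJECT_RE.match(path)
        if project:
            counts[(project.group(1), int(m["status"]))] += 1
    return counts


if __name__ == "__main__":
    for (uid, status), n in sorted(tally_by_project(SAMPLE_LINES).items()):
        print(f"{uid} HTTP {status}: {n}")
```

In the excerpts posted here, every request for P69 and P71 returns 200 (apart from one 422 on `P69:star`), while every request for P20 returns 404, which matches the server's "Project P20 not found" response.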

P71

Traceback (most recent call last):

File "/home/user/cryosparc/cryosparc_master/tools/cryosparc/api.py", line 238, in _call

return self._handle_response(_schema, res)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/user/cryosparc/cryosparc_master/tools/cryosparc/api.py", line 158, in _handle_response

res.raise_for_status()

File "/home/user/cryosparc/cryosparc_master/.pixi/envs/master/lib/python3.12/site-packages/httpx/_models.py", line 829, in raise_for_status

raise HTTPStatusError(message, request=request, response=self)

httpx.HTTPStatusError: Client error '404 Not Found' for url 'http://galileo:39002/projects/P20'

For more information check: 404 Not Found - HTTP | MDN

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "<frozen runpy>", line 198, in _run_module_as_main

File "<frozen runpy>", line 88, in _run_code

File "/home/user/cryosparc/cryosparc_master/cli/setup_client.py", line 28, in <module>

result = eval(sys.argv[1], {"api": api, "db": db, "gfs": gfs}, {})

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "<string>", line 1, in <module>

File "/home/user/cryosparc/cryosparc_master/tools/cryosparc/api.py", line 324, in endpoint

return namespace._call(method, path, schema, *args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/user/cryosparc/cryosparc_master/tools/cryosparc/api.py", line 240, in _call

raise APIError("received error response", res=err.response) from err

tools.cryosparc.errors.APIError: *** [API] (GET http://galileo:39002/projects/P20, code 404) received error response

Response data:

{

"detail": "Project P20 not found"

}

2026-02-04 15:38:30,229 app cryosparc_except ERROR | GET http://galileo:39002/projects/P20 encountered 404 exception: Project P20 not found

2026-02-04 15:38:30,229 uvicorn.access send INFO | 127.0.0.1:34180 - "GET /projects/P20 HTTP/1.1" 404

2026-02-04 15:39:34,899 app cryosparc_except ERROR | GET http://galileo:39002/projects/P20 encountered 404 exception: Project P20 not found

2026-02-04 15:39:34,900 uvicorn.access send INFO | 127.0.0.1:42460 - "GET /projects/P20 HTTP/1.1" 404

{"id": "69823b4881a30ded419251fc", "updated_at": "2026-02-03T18:55:39.401000Z", "created_at": "2024-10-18T17:23:45.901000Z", "autodump": false, "uid": "P71", "project_dir": "/home/user/Data/CS_Project_file/NFB/CS-n4-vrna-apo-and-p1-slac", "owner_user_id": "665112e30ec36e01397b8dde", "title": "N4_vRNA_Apo_and_P1_SLAC", "description": "Enter a description.", "project_params_pdef": {}, "queue_paused": false, "deleted": false, "deleting": false, "users_with_access": ["665112e30ec36e01397b8dde"], "size": 0, "size_last_updated": "2024-10-23T17:38:39.921000Z", "last_accessed": {"name": "nfbellis", "accessed_at": "2026-02-03T18:55:39.401000Z"}, "archived": false, "detached": false, "generate_intermediate_results_settings": {"class_2D_new": false, "class_3D": false, "var_3D_disp": false}, "last_exp_group_id_used": 4, "imported_at": "2026-02-03T18:15:36.536000Z", "import_status": "failed", "project_stats": {"workspace_count": 93, "session_count": 2, "job_count": 2643, "job_types": {"select_2D": 508, "class_2D_new": 358, "homo_reconstruct": 315, "homo_abinit": 256, "nonuniform_refine_new": 231, "new_local_refine": 162, "class_3D": 160, "import_volumes": 65, "volume_tools": 61, "extract_micrographs_multi": 50, "rtp_worker": 50, "hetero_refine": 48, "align_3D_new": 31, "particle_sets": 25, "ctf_refine_local": 24, "curate_exposures_v2": 23, "template_picker_gpu": 22, "inspect_picks_v2": 19, "remove_duplicate_particles": 18, "create_templates": 16, "subset_particles": 15, "cache_particles": 14, "extract_micrographs_cpu_parallel": 14, "downsample_particles": 13, "topaz_extract": 13, "volume_alignment_tools": 12, "local_resolution": 12, "reference_motion_correction": 9, "homo_refine_new": 8, "particle_subtract": 8, "simulator_gpu": 7, "blob_picker_gpu": 7, "ctf_refine_global": 6, "rebalance_3D": 6, "autoselect_2D": 5, "reference_select_2D": 4, "exposure_sets": 4, "orientation_diagnostics": 4, "patch_ctf_estimation_multi": 4, "patch_motion_correction_multi": 4, "rebalance_classes_2D": 4, "topaz_train": 4, "export_live_exposures": 3, "export_live_particles": 3, "snowflake": 2, "reconstruct_2D": 2, "manual_picker_v2": 2, "var_3D": 2, "live_session": 2, "class_2D_streaming": 2, "junk_detector_v1": 1, "import_micrographs": 1, "var_3D_disp": 1, "auto_blob_picker_gpu": 1, "import_movies": 1, "local_filter": 1}, "job_sections": {"import": 67, "motion_correction": 13, "ctf_estimation": 4, "exposure_curation": 24, "particle_picking": 67, "extraction": 77, "deep_picker": 17, "particle_curation": 897, "reconstruction": 256, "refinement": 602, "ctf_refinement": 30, "variability": 163, "postprocessing": 17, "local_refinement": 170, "utilities": 167, "simulations": 7, "live": 60}, "job_status": {"completed": 2498, "building": 140, "killed": 5}, "updated_at": "2026-02-03T21:14:26.099000Z"}, "created_at_version": "v4.6.0", "is_cleanup_in_progress": false, "tags": [], "starred_by": [], "autodump_failed": false, "autodump_errors": [], "uid_num": 71}
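Since P69 (5.0) and P71 (5.0.1) are imports of the same project directory, comparing the two documents field by field shows whether the second import behaved any differently. A minimal sketch, assuming both documents are loaded as Python dicts; `P69` and `P71` below are abbreviated copies of a few fields from the documents above, and `diff_docs` is a hypothetical helper:

```python
# Abbreviated copies of the P69 (5.0) and P71 (5.0.1) project documents above;
# only a handful of fields are kept for illustration.
P69 = {
    "id": "697934b5e755bd1dd321fff4",
    "uid": "P69",
    "uid_num": 69,
    "project_dir": "/home/user/Data/CS_Project_file/NFB/CS-n4-vrna-apo-and-p1-slac",
    "imported_at": "2026-01-27T21:57:09.495000Z",
    "import_status": "failed",
    "project_stats": {"job_count": 2643, "updated_at": "2026-01-28T16:30:08.236000Z"},
}
P71 = {
    "id": "69823b4881a30ded419251fc",
    "uid": "P71",
    "uid_num": 71,
    "project_dir": "/home/user/Data/CS_Project_file/NFB/CS-n4-vrna-apo-and-p1-slac",
    "imported_at": "2026-02-03T18:15:36.536000Z",
    "import_status": "failed",
    "project_stats": {"job_count": 2643, "updated_at": "2026-02-03T21:14:26.099000Z"},
}


def diff_docs(a, b, prefix=""):
    """Hypothetical helper: list (key path, value in a, value in b) for fields that differ."""
    diffs = []
    for key in sorted(set(a) | set(b)):
        path = f"{prefix}{key}"
        va, vb = a.get(key), b.get(key)
        if isinstance(va, dict) and isinstance(vb, dict):
            # Recurse into nested objects such as project_stats.
            diffs.extend(diff_docs(va, vb, prefix=f"{path}."))
        elif va != vb:
            diffs.append((path, va, vb))
    return diffs


if __name__ == "__main__":
    for path, old, new in diff_docs(P69, P71):
        print(f"{path}: {old!r} -> {new!r}")
```

In the fields shown, the two documents differ only in identifiers and timestamps; both report `import_status: failed` for the same 2643 jobs, which suggests the 5.0.1 import ran into the same problem as the 5.0 one.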

2026-02-03 12:54:12,545 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J985 (Homogeneous Reconstruction Only)

2026-02-03 12:54:12,560 core.data_management _update_imported INFO | [IMPORT] Updated Job P71-J986 (3D Classification) asset references

2026-02-03 12:54:12,571 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J987 (Non-uniform Refinement)

2026-02-03 12:54:12,587 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J988 (Non-uniform Refinement)

2026-02-03 12:54:12,605 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J989 (3D Classification)

2026-02-03 12:54:12,619 core.data_management _update_imported INFO | [IMPORT] Updated Job P71-J99 (Live Preprocessing Worker) asset references

2026-02-03 12:54:15,478 core.data_management _update_imported INFO | [IMPORT] Updated Job P71-J990 (Local Refinement) asset references

2026-02-03 12:54:15,486 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J991 (Volume Tools)

2026-02-03 12:54:15,495 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J992 (Local Refinement)

2026-02-03 12:54:15,509 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J993 (Ab-Initio Reconstruction)

2026-02-03 12:54:15,520 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J994 (Homogeneous Reconstruction Only)

2026-02-03 12:54:15,533 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J995 (Homogeneous Reconstruction Only)

2026-02-03 12:54:15,547 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J996 (Homogeneous Reconstruction Only)

2026-02-03 12:54:15,561 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J997 (Non-uniform Refinement)

2026-02-03 12:54:15,579 core.data_management _update_imported INFO | [IMPORT] Updated Job P71-J998 (3D Classification) asset references

2026-02-03 12:54:15,589 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J999 (Select 2D Classes)

2026-02-03 12:54:16,557 core.data_management attach_project_d ERROR | Not all job data could be loaded into Project P71, 766 failed to load.

2026-02-03 12:54:16,557 core.data_management attach_project_d ERROR | Not all jobs in the manifest were loaded into Project P71, 1 failed to load.
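The summary lines report 766 jobs whose data failed to load. To see which jobs and job types are affected, the `attach_jobs_data` and `_update_imported` lines can be split into failed and updated sets. A minimal sketch, assuming the lines are formatted exactly as pasted above; `SAMPLE_LINES` is copied from the excerpt, and `classify_import_lines` is a hypothetical helper:

```python
import re
from collections import Counter

# Patterns for the two kinds of core.data_management lines pasted above.
FAILED_RE = re.compile(r"Error importing job data for Job (P\d+-J\d+) \((.+)\)$")
UPDATED_RE = re.compile(r"\[IMPORT\] Updated Job (P\d+-J\d+) \((.+)\) asset references$")

SAMPLE_LINES = [
    "2026-02-03 12:54:12,545 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J985 (Homogeneous Reconstruction Only)",
    "2026-02-03 12:54:12,560 core.data_management _update_imported INFO | [IMPORT] Updated Job P71-J986 (3D Classification) asset references",
    "2026-02-03 12:54:12,571 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J987 (Non-uniform Refinement)",
    "2026-02-03 12:54:12,587 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J988 (Non-uniform Refinement)",
    "2026-02-03 12:54:15,520 core.data_management attach_jobs_data ERROR | Error importing job data for Job P71-J994 (Homogeneous Reconstruction Only)",
]


def classify_import_lines(lines):
    """Hypothetical helper: map job UID -> job title for failed and updated jobs."""
    failed, updated = {}, {}
    for line in lines:
        line = line.strip()
        if m := FAILED_RE.search(line):
            failed[m.group(1)] = m.group(2)
        elif m := UPDATED_RE.search(line):
            updated[m.group(1)] = m.group(2)
    return failed, updated


if __name__ == "__main__":
    failed, updated = classify_import_lines(SAMPLE_LINES)
    print(f"{len(failed)} failed, {len(updated)} updated")
    # Group the failures by job type to see whether specific job types dominate.
    for title, n in Counter(failed.values()).most_common():
        print(f"  {title}: {n}")
```

Run over the full import log, the length of `failed` could be cross-checked against the 766 failures reported in the summary line.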

2026-02-03 12:55:22,414 uvicorn.access send INFO | 127.0.0.1:39914 - "GET /projects/P71/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-03 12:55:22,485 uvicorn.access send INFO | 127.0.0.1:39924 - "POST /projects/P71%3Aview HTTP/1.1" 200

2026-02-03 12:55:23,559 uvicorn.access send INFO | 127.0.0.1:39926 - "GET /projects/P71/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-03 12:55:23,593 uvicorn.access send INFO | 127.0.0.1:39934 - "POST /projects/P71%3Aview HTTP/1.1" 200

2026-02-03 12:55:37,780 uvicorn.access send INFO | 127.0.0.1:35286 - "POST /projects/P71%3Aview HTTP/1.1" 200

2026-02-03 12:55:39,411 uvicorn.access send INFO | 127.0.0.1:35296 - "POST /projects/P71%3Aview HTTP/1.1" 200

2026-02-03 14:29:06,616 uvicorn.access send INFO | 127.0.0.1:58334 - "GET /projects/P71/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-03 14:37:35,337 uvicorn.access send INFO | 127.0.0.1:32914 - "POST /projects/P71/workspaces/W74%3Aview HTTP/1.1" 200

2026-02-03 15:14:28,517 uvicorn.access send INFO | 127.0.0.1:51724 - "GET /projects/P71/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-03 15:14:33,626 uvicorn.access send INFO | 127.0.0.1:59358 - "POST /projects/P71/workspaces/W74%3Aview HTTP/1.1" 200

2026-02-03 15:37:59,918 uvicorn.access send INFO | 127.0.0.1:39296 - "GET /projects/P71/generate_intermediate_results_settings HTTP/1.1" 200

2026-02-04 15:39:37,769 uvicorn.access send INFO | 127.0.0.1:42462 - "GET /projects/P71 HTTP/1.1" 200