Hi,

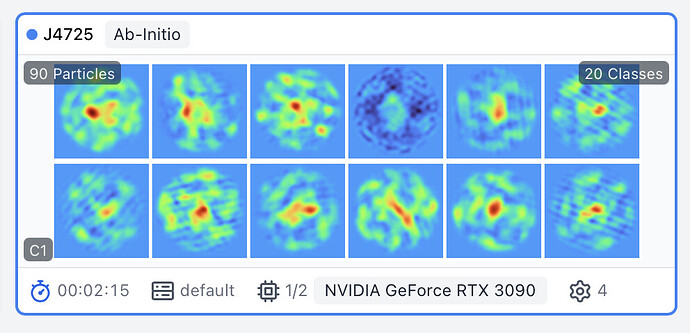

As the number of classes in ab initio reconstruction increases, linear artefacts in the resulting classes become increasingly prominent, to the point where runs with more than 12 classes give unusable results, even when there are more than enough particles available per class. Is this a known limitation of the algorithm?

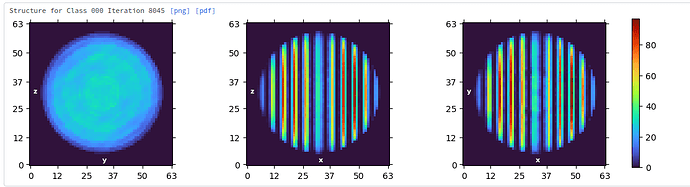

I wonder if this has something to do with the way ab initio is initialized? I know it is supposed to start from random density, and indeed the initial volume slices in the log look random, but the volume slices in the job card seem to have some strong linear artefacts superimposed. It almost seems like the initialization happens in two steps? Perhaps the second step assigns a fixed number (90?) of particles to each random density class and then somehow recalculates the classes from them?

This effect is particularly noticeable when I switch off “enforce non-negativity”, a parameter change that can help fix some failure modes of ab initio (e.g. “pancaking” of high symmetry entities in C1).
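To make the two-step guess above concrete, here is a toy 2D sketch in Python/NumPy. It is not the software's actual initialization, just an assumption-laden analogue: each "particle" is a random 1D profile smeared across the image at a random angle, and a "class" is the average of those smears. The function names (`backproject`, `toy_class`) and the nearest-neighbour smearing are made up for illustration.

```python
import numpy as np

def backproject(profiles, angles, n):
    """Average 1D profiles smeared across an n x n grid at the given angles."""
    # Pixel coordinates centred on the middle of the grid.
    ys, xs = np.mgrid[0:n, 0:n] - (n - 1) / 2.0
    out = np.zeros((n, n))
    for profile, theta in zip(profiles, angles):
        # Position of every pixel along the (rotated) detector axis.
        t = xs * np.cos(theta) + ys * np.sin(theta)
        # Nearest-neighbour lookup; pixels outside the profile are clipped.
        idx = np.clip(np.round(t + (n - 1) / 2.0).astype(int), 0, n - 1)
        # Each profile is constant along its projection direction: a "streak".
        out += profile[idx]
    return out / len(angles)

def toy_class(n_particles, n=64, seed=0):
    """Hypothetical class built from n_particles noise-only 'particles'."""
    rng = np.random.default_rng(seed)
    profiles = rng.normal(size=(n_particles, n))
    angles = rng.uniform(0.0, np.pi, size=n_particles)
    return backproject(profiles, angles, n)
```

Viewing `toy_class(90)` next to `toy_class(2000)` (e.g. with `matplotlib.pyplot.imshow`) is one way to check whether a class seeded from only a small, fixed number of particles would be expected to show line-like structure that averages out with more particles. Whether this is what happens in the real job is exactly the open question.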

Cheers

Oli

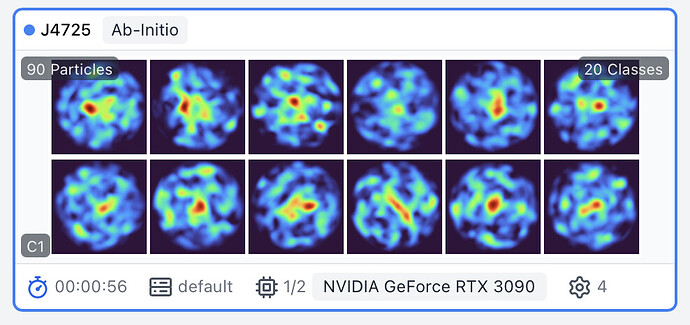

12 classes, it100:

20 classes, it100:

random initialization step1, enforce non-negativity off, 20 classes:

random initialization step2, enforce non-negativity off, 20 classes: