Hello,

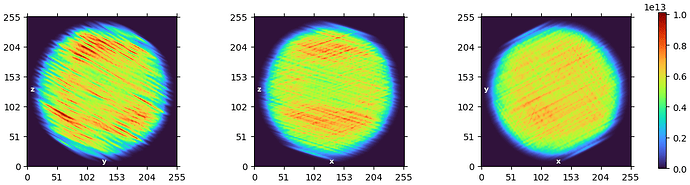

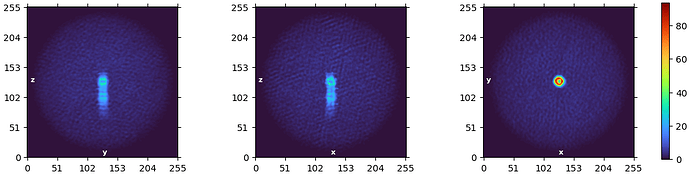

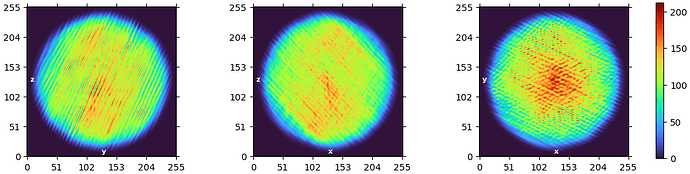

I’m having trouble processing a set of long stacked particles. I’ve attached some representative 2D classes. No matter what I try, I haven’t been able to get a reasonable ab initio reconstruction. I’ve looked through many posts from users with similar issues, but I’m still stuck. I’m working in CryoSPARC v4.7.1.

At first, the ab initio job failed with this error:

    ValueError: Detected NaN values in engine.compute_error.
    41783040 NaNs in total, 90 particles with NaNs.

I checked for corrupt particles, but none were found.
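To double-check the stack outside CryoSPARC, a short NumPy sketch can count NaN/Inf pixels per particle. It assumes the particle images have already been loaded into an array of shape (n_particles, height, width), for example with an MRC reader. The function names are hypothetical and are not part of CryoSPARC. A clean result only rules out non-finite values in the stored images. It says nothing about NaNs that arise later inside the computation.

```python
import numpy as np


def find_nonfinite_particles(stack):
    """Return indices of particles that contain any NaN or Inf pixel.

    Assumes `stack` is array-like with shape (n_particles, height, width).
    """
    stack = np.asarray(stack)
    # One flag per particle: True if any pixel in that image is non-finite.
    bad = ~np.isfinite(stack).all(axis=(1, 2))
    return np.flatnonzero(bad)


def summarize_nonfinite(stack):
    """Return (total non-finite pixel count, indices of affected particles)."""
    stack = np.asarray(stack)
    total = int(np.count_nonzero(~np.isfinite(stack)))
    return total, find_nonfinite_particles(stack)


if __name__ == "__main__":
    # Synthetic stand-in for a real stack: 5 particles of 8 x 8 pixels.
    rng = np.random.default_rng(0)
    demo = rng.normal(size=(5, 8, 8)).astype(np.float32)
    demo[1, 2, 3] = np.nan
    demo[4, 0, 0] = np.inf
    total, idx = summarize_nonfinite(demo)
    print(f"{total} non-finite pixels in {idx.size} particles: {idx.tolist()}")
```

On the synthetic stack, the demo prints `2 non-finite pixels in 2 particles: [1, 4]`.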

Following suggestions from the forum, I changed three settings: I set Noise model (white, symmetric or coloured) to white, and I turned off both Enforce non-negativity and Center structures in real space.

After doing that, the job failed with the following assertion error:

    Traceback (most recent call last):
      File "cryosparc_master/cryosparc_compute/run.py", line 129, in cryosparc_master.cryosparc_compute.run.main
      File "cryosparc_master/cryosparc_compute/jobs/abinit/run.py", line 302, in cryosparc_master.cryosparc_compute.jobs.abinit.run.run_homo_abinit
      File "/cluster/software/cryosparc/cryosparc-durielab/v4.7.1/cryosparc_worker/cryosparc_compute/noise_model.py", line 119, in get_noise_estimate
        assert n.all(n.isfinite(ret))
    AssertionError

For context, this ab initio job contains close to 20,000 particles. They were picked with the template picker, extracted in a 2000-pixel box, and downsampled to 500 pixels. During the upstream 2D classification, I used a circular mask diameter of 800 Å. I am happy to share more information about any upstream jobs if needed. Thank you!
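A small sketch of the geometry arithmetic makes the box and mask numbers above easier to compare. The raw pixel size is not stated in this post, so 1.0 Å/pixel is only a placeholder. The 2000-to-500 pixel downsampling and the 800 Å mask come from the post. `BoxGeometry` and `box_geometry` are hypothetical helper names.

```python
from dataclasses import dataclass


@dataclass
class BoxGeometry:
    downsample_factor: float  # extraction box / final box
    pixel_size_a: float       # Å per pixel after downsampling
    box_extent_a: float       # physical box edge length in Å (unchanged by downsampling)
    nyquist_a: float          # Nyquist resolution limit in Å (2 x pixel size)
    mask_fraction: float      # circular mask diameter / box edge length


def box_geometry(raw_pixel_size_a: float,
                 extract_box_px: int = 2000,
                 final_box_px: int = 500,
                 mask_diameter_a: float = 800.0) -> BoxGeometry:
    """Derive downsampled pixel size, box extent, Nyquist limit and mask coverage."""
    if extract_box_px <= 0 or final_box_px <= 0 or raw_pixel_size_a <= 0:
        raise ValueError("box sizes and pixel size must be positive")
    factor = extract_box_px / final_box_px
    pixel_size = raw_pixel_size_a * factor
    # Equals extract_box_px * raw_pixel_size_a: downsampling keeps the field of view.
    box_extent = final_box_px * pixel_size
    return BoxGeometry(
        downsample_factor=factor,
        pixel_size_a=pixel_size,
        box_extent_a=box_extent,
        nyquist_a=2.0 * pixel_size,
        mask_fraction=mask_diameter_a / box_extent,
    )


if __name__ == "__main__":
    # Placeholder raw pixel size; substitute the dataset's actual value.
    print(box_geometry(1.0))
```

With the placeholder, a 4x downsample gives 4.0 Å/pixel and an 8.0 Å Nyquist limit, and the 800 Å mask spans 40% of a 2000 Å box. Pixel size, box extent and Nyquist limit scale linearly with the real pixel size, while the mask fraction scales inversely.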